It may be counterintuitive. But some argue that the key to training AI systems that must work in messy real-world environments, such as self-driving cars and warehouse robots, is not, in fact, real-world data. Instead, some say, synthetic data is what will unlock the true potential of AI. Synthetic data is generated instead of collected, and the consultancy Gartner has estimated that by 2024, 60 percent of data used to train AI systems will be synthetic. But its use is controversial, as questions remain about whether synthetic data can accurately mirror real-world data and prepare AI systems for real-world situations.

Nvidia has embraced the synthetic data trend and is striving to be a leader in the young industry. In November, Nvidia founder and CEO Jensen Huang announced the launch of the Omniverse Replicator, which Nvidia describes as “an engine for generating synthetic data with ground truth for training AI networks.” To find out what that means, IEEE Spectrum spoke with Rev Lebaredian, vice president of simulation technology and Omniverse engineering at Nvidia.

Rev Lebaredian on...

- What Nvidia hopes to achieve with Omniverse

- Why today’s real-world data isn’t good enough

- Why autonomous vehicles need synthetic data

- Overfitting, algorithmic bias, and adversarial attacks

The Omniverse Replicator is described as “a powerful synthetic data generation engine that produces physically simulated synthetic data for training neural networks.” Can you explain what that means, and especially what you mean by “physically simulated”?

Rev Lebaredian (Photo: Nvidia)

Rev Lebaredian: Video games are essentially simulations of fantastic worlds. There are attempts to make the physics of games somewhat realistic: When you blow up a wall or a building, it crumbles. But for the most part, games aren’t trying to be truly physically accurate, because that’s computationally very expensive. So it’s always about: What approximations are you willing to make in order to make it tractable as a computing problem? A video game typically has to run on a small computer, like a console or even a phone. So you have those severe constraints. The other thing with games is that they’re fantasy worlds and they’re meant to be fun, so real-world physics and accuracy are not necessarily a great thing.

With Omniverse, our goal is to do something that really hasn’t been done before in real-time world simulators. We’re trying to make a physically accurate simulation of the world. And when we say physically accurate, we mean all aspects of physics that are relevant. How things look in the physical world is the physics of how light interacts with matter, so we simulate that. We simulate how atoms interact with each other with rigid-body physics, soft-body physics, fluid dynamics, and whatever else is relevant. Because we believe that if you can simulate the real world closely enough, then you gain superpowers.

What kind of superpowers?

Lebaredian: First, you get teleportation. If I can take this room around me and represent it in a virtual world, now I can move my camera around in that world and teleport to any location. I can even put on a VR headset and feel like I’m inside it. And if I can synchronize the state of the real world with the virtual one, then there’s really no difference. I might have sensors on Mars that ingest the real world and send over a copy of that info to Earth in real time, or 8 minutes later or however long it takes for light to travel from Mars. If I can reconstruct that world virtually and immerse myself in it, then effectively it’s like I’m teleporting to Mars 8 minutes ago.
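The delay Lebaredian mentions depends on where the two planets are in their orbits. As a rough check, dividing approximate Earth-Mars distances by the speed of light gives one-way delays of roughly 3 to 22 minutes. The distances below are rounded figures for illustration, not mission data.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact, by definition of the metre

# Approximate Earth-Mars distances in km; they change as both planets orbit the Sun.
EARTH_MARS_KM = {
    "closest approach": 54.6e6,
    "average": 225e6,
    "farthest": 401e6,
}


def one_way_delay_minutes(distance_km: float) -> float:
    """Time for a light (or radio) signal to cover distance_km, in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


for label, d in EARTH_MARS_KM.items():
    print(f"{label:>16}: {one_way_delay_minutes(d):5.1f} min")
```

So "8 minutes" is one plausible value within that range; a real-time Mars replica would always lag by somewhere between a few minutes and over 20.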

And given some initial conditions about the state of the world, if you can simulate accurately enough, then you can potentially predict the future. Say I have the state of the world right now in this room and I’m holding this phone up. I can simulate what happens the moment I let go and it falls—and if my simulation is close enough, then I can predict how this phone is going to fall and hit the ground. What’s really cool about that is you can change the initial conditions and do some experiments. You can say, What can alternate futures look like? What if I reconfigure my factory or make different decisions about how I manipulate things in my environment? What would these different futures look like? And that allows you to do optimizations. You can find the best future.
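The "drop the phone" thought experiment can be made concrete with a tiny simulator: step the physics forward from known initial conditions to predict the outcome, then rerun it with different initial conditions and keep the best result. A minimal sketch, assuming a point mass under gravity only (no air drag, bounce, or tumbling), far simpler than the physically accurate simulation described above. The function name, the 1.2 m height, and the 0.5 m "cushion" target are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def simulate_drop(height_m, vx_m_s=0.0, dt=1e-4):
    """Step a point-mass 'phone' forward in time until it reaches the floor.

    Uses semi-implicit Euler integration with gravity only: a deliberately
    simplified stand-in for a real physics engine.
    Returns (time_to_impact_s, landing_x_m, impact_speed_m_s).
    """
    t, x, y = 0.0, 0.0, height_m
    vx, vy = vx_m_s, 0.0
    while y > 0.0:
        vy -= G * dt  # update velocity from acceleration first...
        x += vx * dt  # ...then position from the new velocity
        y += vy * dt
        t += dt
    return t, x, math.hypot(vx, vy)


# Predict one future: let go of the phone from 1.2 m.
t, _, speed = simulate_drop(1.2)
print(f"impact after {t:.3f} s at {speed:.2f} m/s "
      f"(closed form: {math.sqrt(2 * 1.2 / G):.3f} s)")

# Explore alternate futures: which sideways push lands the phone nearest a
# cushion 0.5 m away? Try pushes from 0.00 to 2.00 m/s in 0.05 m/s steps.
target_x = 0.5
candidates = [i * 0.05 for i in range(41)]
best_vx = min(candidates, key=lambda v: abs(simulate_drop(1.2, v)[1] - target_x))
print(f"best push: {best_vx:.2f} m/s")
```

A brute-force sweep over candidates is the crudest version of "finding the best future"; with an expensive simulator one would typically use a smarter search, but the structure (simulate, score, compare) is the same.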

Okay, so that’s what you’re trying to build with Omniverse. How does all this help with AI?

Lebaredian: In this new era of AI, developing advanced software is no longer something that just a grad student with a laptop can do. It requires serious investment. All the most advanced algorithms that mankind will develop in the future are going to be trained by systems that require a lot of data. That’s why people say data is the new oil. And it seems like the big tech companies that collect data have a natural advantage. But the truth is that for most of the AI that we’re going to create in the future, none of the data we have collected is that useful.

I noticed it when we did a demo for [the conference] SIGGRAPH 2017. We had a robot that could play dominoes, and we had multiple AI models that we had to train. One of the basic ones was a computer-vision model that could detect the dominoes that were on the table, tell you their orientation, and then tell you how many pips were on each domino: one, five, six, or whatever.

Surely Google would have all the image data you need to train such an AI.

Lebaredian: You can search Google images and you’ll find lots of pictures of dominoes, but what you’ll find is, first of all, none of them are labeled. A human has to label what each domino is and the side of each domino, and that’s a whole bunch of manual labor. But even if you get past the labeling, you’ll find that the images don’t have much diversity. We needed our algorithm to be robust to different lighting conditions because we were going to train it in our lab, but then take it to the show floor at SIGGRAPH. The cameras and sensors we used might also change, so the conditions around those could be different. We wanted the algorithm to work with any type of dominoes, whether they’re plastic or wood or whatever material. So even for this really simple thing, the necessary data just didn’t exist. If we were to go collect that data, we’d have to buy dozens or maybe hundreds of different domino sets, set up different lighting conditions and different sensors and all of that. So, back then, we quickly coded up a random domino generator in a game engine that randomized all of that stuff. And overnight we trained a model that could do this robustly, and it worked in the convention center with different cameras.
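Lebaredian's overnight fix is an instance of what is commonly called domain randomization: vary everything the model should be robust to, and get the labels for free because the generator chose them. A minimal sketch of the sampling side, assuming hypothetical parameter names and ranges (this is not Nvidia's SIGGRAPH code, and the rendering step in a game engine is left out):

```python
import random
from dataclasses import dataclass

MATERIALS = ["plastic", "wood", "bone", "metal"]  # hypothetical material list


@dataclass
class DominoSample:
    # Scene parameters a renderer would consume (all randomized).
    material: str
    light_intensity: float     # arbitrary units
    light_color_temp_k: float  # warm indoor light to daylight
    camera_fov_deg: float      # stands in for "different cameras"
    yaw_deg: float             # domino orientation on the table
    # Ground-truth labels, known exactly because the generator chose them.
    pips_top: int
    pips_bottom: int


def random_domino(rng: random.Random) -> DominoSample:
    """Draw one randomized scene description plus its exact labels."""
    return DominoSample(
        material=rng.choice(MATERIALS),
        light_intensity=rng.uniform(0.2, 2.0),
        light_color_temp_k=rng.uniform(2700, 6500),
        camera_fov_deg=rng.uniform(40, 90),
        yaw_deg=rng.uniform(0, 360),
        pips_top=rng.randint(0, 6),  # a double-six set has 0 to 6 pips per half
        pips_bottom=rng.randint(0, 6),
    )


rng = random.Random(2017)
dataset = [random_domino(rng) for _ in range(10_000)]
print(dataset[0])
```

Each sample would be handed to a renderer to produce an image; because the pip counts and orientation were set by the generator, no human annotation is needed.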

That’s one simple case. For something more complex like self-driving cars or autonomous machines, the amount of data that we need, and the accuracy and diversity of that data, is just impossible to get from the real world. There’s really no way around it. Without physically accurate simulation to generate the data we need for these AIs, there’s no way we’re going to progress.

With Omniverse Replicator, are customers getting a one-size-fits-all synthetic data generator? Or are you tailoring it for different industries?

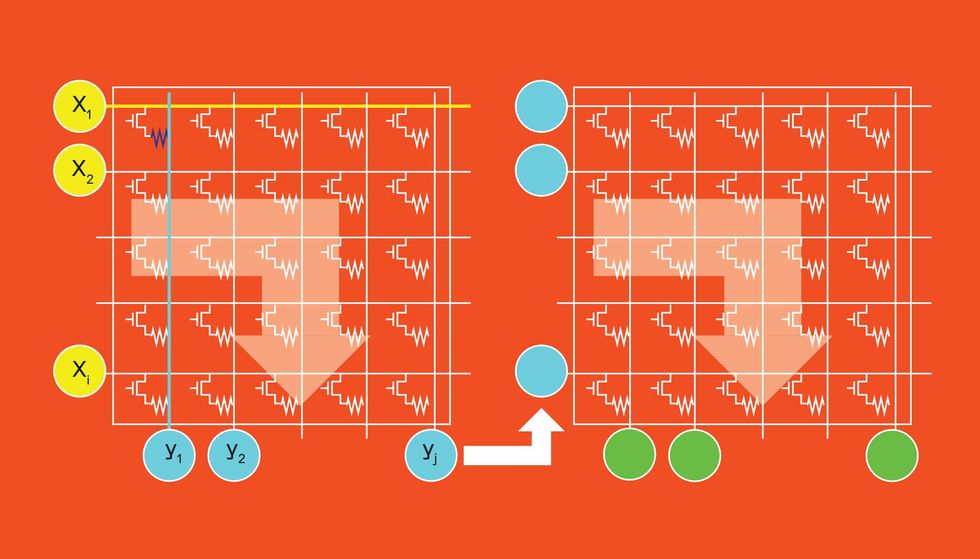

Lebaredian: What we’re building with Omniverse is a very general development platform that anyone can take and customize for their particular needs. Out of the box you get multiple renderers, which are simulators of the physics of light and matter. You get a spectrum of them that let you trade off accuracy for speed.
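The interview doesn't say how Omniverse's renderers differ internally. One common knob behind an accuracy-for-speed tradeoff in physically based renderers is the number of Monte Carlo samples: more samples cost more time but reduce noise, roughly in proportion to 1/sqrt(N). A toy sketch, assuming a flat surface lit by a uniform sky, a case whose exact irradiance is known to be pi times the sky radiance:

```python
import math
import random


def irradiance_estimate(num_samples, sky_radiance=1.0, seed=0):
    """Monte Carlo estimate of irradiance on a flat surface under a uniform sky.

    Directions are drawn uniformly over the hemisphere (pdf = 1 / (2*pi)).
    Under that distribution cos(theta) is itself uniform on [0, 1], so only
    the z-component needs to be sampled. Exact answer: pi * sky_radiance.
    """
    rng = random.Random(seed)
    pdf = 1.0 / (2.0 * math.pi)
    total = 0.0
    for _ in range(num_samples):
        cos_theta = rng.random()
        total += sky_radiance * cos_theta / pdf  # importance-weighted sample
    return total / num_samples


# Cheap and noisy at the top, slow and accurate at the bottom.
for n in (4, 64, 1024, 16384):
    est = irradiance_estimate(n)
    print(f"{n:>6} samples: estimate {est:.4f}, error {abs(est - math.pi):.4f}")
```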

We have a bunch of ways to bring in 3D data as inputs to Omniverse Replicator to generate the data that you need. For pretty much everything that’s man-made these days, there’s a 3D virtual representation of it somewhere. If you’re designing a car, a phone, a building, a bridge, or whatever, you use a CAD tool. The problem is that all these tools speak different languages. The data is in different formats. It’s very hard to combine them and build a scene that has all those constituent parts.

With Omniverse, we’ve gone through the trouble of trying to connect all of these existing tools and harmonizing them. We built Omniverse on top of a system called Universal Scene Description (USD) that was originally developed by Pixar and later open-sourced. We think USD is to virtual worlds as HTML is to Web pages: It’s a common way to describe things. We built a lot of tools around USD to let users transform the data, modify it, randomize things. But the source data can come from virtually anywhere because we have connectors to all the different tools that are relevant.

Can you give me an example of an industry that would use Replicator to make synthetic data for AI training?

Lebaredian: We’ve shown the example of autonomous vehicles. There’s a lot of money going into figuring out how to make vehicles drive themselves, and synthetic data is becoming a major part of training the AI systems. We’ve already done some specialization within Omniverse Replicator for this domain: We have big outdoor worlds with roads and lanes and cars and pedestrians and street signs and all that kind of stuff.

We’ve also done some specialization for robotics. But if we don’t support your domain out of the box, since it’s a tool kit, you can take it and do what you like with it. People have many paths to bring in their own 3D data or get data to construct virtual worlds. There are libraries and third-party 3D asset providers out there.

Video: NVIDIA Omniverse Replicator For DRIVE Sim: Synthetic Data Generation (YouTube)

For an autonomous vehicle company, an advantage of generating synthetic data is that it could train its vehicles on dangerous conditions, right? It can put in snow and ice, hard turns, that kind of thing?

Lebaredian: They can change day and night conditions and position pedestrians and animals in dangerous situations that you wouldn’t want to construct in the real world. We don’t want to put humans or animals in perilous situations in real life, but I sure do want my autonomous vehicle to know how to react to these types of fringe situations. So if we can train them in the virtual world where it’s safe first, we get the best of both worlds.

So this synthetic data can be used in AI training as “ground truth data” with built-in labels that are superaccurate. But is that the best training strategy? These AI systems often need to operate in the world with incomplete and imperfect information.

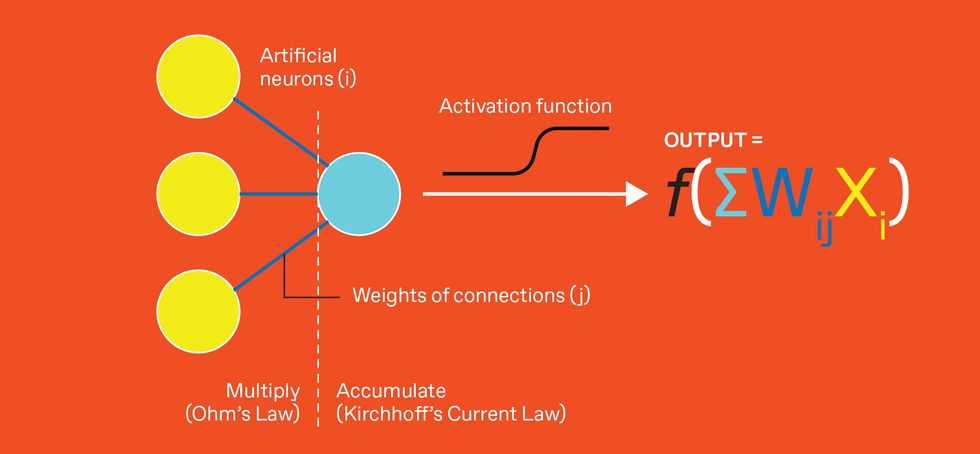

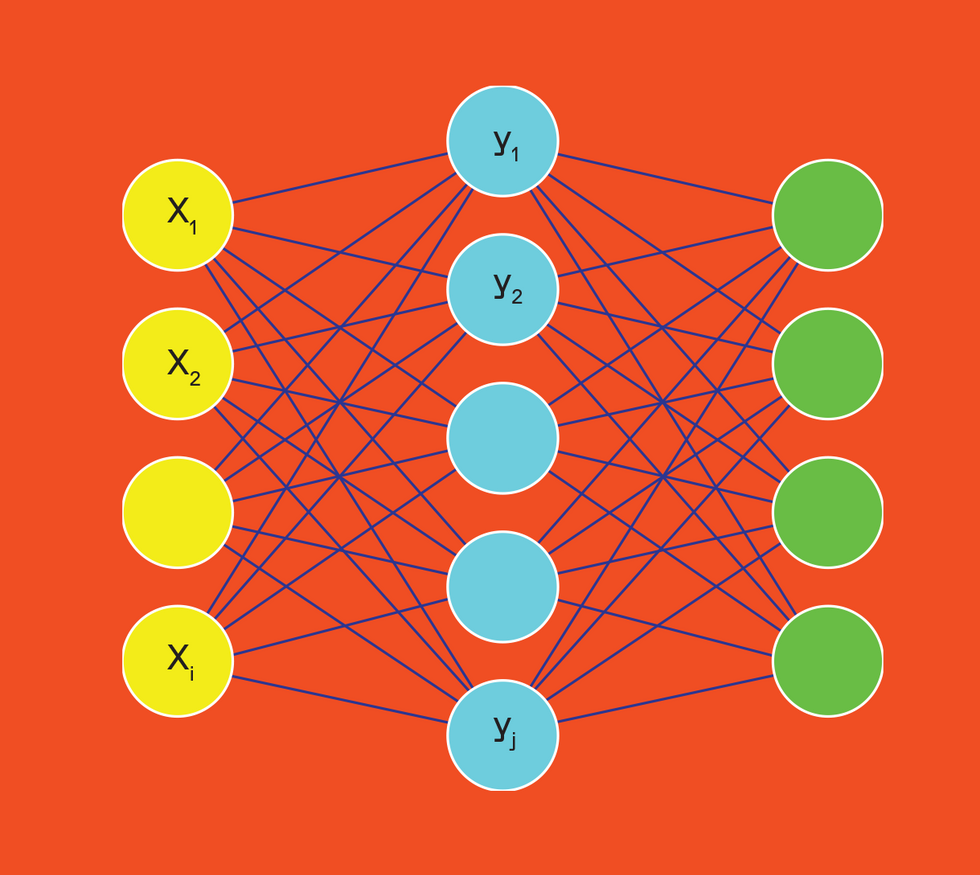

Lebaredian: It’s good for the training part. The way most AI is created today is through a type of learning called supervised learning. In the example of a neural network that can tell the difference between a cat and a dog, you first train it on pictures of cats and dogs that are labeled: This is a cat and this is a dog. It learns from those examples. Then you go apply that network on new images that aren’t labeled, and it will tell you what each one is.
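The train-then-predict pattern of supervised learning can be shown with a far simpler learner than a neural network. The sketch below swaps in a nearest-centroid classifier over two hypothetical hand-made features; real cat/dog classifiers learn from pixels, but the split between a labeled training phase and prediction on unlabeled inputs is the same.

```python
from statistics import fmean

# Hypothetical toy features, e.g. [ear pointiness, snout length], with labels.
train = [
    ([0.9, 0.2], "cat"), ([0.8, 0.3], "cat"), ([0.85, 0.25], "cat"),
    ([0.3, 0.8], "dog"), ([0.2, 0.9], "dog"), ([0.35, 0.7], "dog"),
]


def fit_centroids(examples):
    """Training: average the feature vectors that share each label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: [fmean(column) for column in zip(*vectors)]
        for label, vectors in by_label.items()
    }


def predict(centroids, features):
    """Inference: return the label whose centroid is nearest (squared distance)."""
    return min(
        centroids,
        key=lambda lbl: sum((a - b) ** 2 for a, b in zip(features, centroids[lbl])),
    )


centroids = fit_centroids(train)
print(predict(centroids, [0.75, 0.3]))  # an unlabeled input; prints "cat"
```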

For example, in autonomous vehicles you want your car to know, by looking through its sensors at the world, the relative 3D positions of all of the cars and pedestrians around it. But it’s just getting a 2D image that’s nothing but pixels; there’s no 3D information attached to it. So if you’re going to train a network to infer that 3D information, you first have to draw a box around things in 2D and then you have to tell it, ‘Here’s how far away it is based on the particular lens that was used with that sensor.’ But if we synthesize the data in Omniverse, we have all of that 3D information at full physical accuracy. We can provide exact labeling without the errors that a human would introduce into the system. So the resulting neural network that we train is going to be smarter and more accurate.
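Why synthetic 3D scenes yield exact labels can be illustrated with the standard pinhole camera model: if the generator knows an object's 3D position and the camera's intrinsics, it can compute the 2D bounding box and depth directly instead of asking a person to estimate them. A minimal sketch, assuming an ideal lens with no distortion, an object entirely in front of the camera, and hypothetical intrinsics for a 1920 x 1080 sensor (this is not Omniverse Replicator's actual label format):

```python
import itertools
from dataclasses import dataclass


@dataclass
class PinholeCamera:
    fx: float  # focal length in pixels, x
    fy: float  # focal length in pixels, y
    cx: float  # principal point, x (pixels)
    cy: float  # principal point, y (pixels)

    def project(self, x, y, z):
        """Project a camera-space point (z forward, metres) to pixel coordinates."""
        return self.fx * x / z + self.cx, self.fy * y / z + self.cy


def box_label(cam, center, size):
    """Exact 2D bounding box and depth for an axis-aligned 3D box in camera space.

    Assumes every corner has z > 0 (fully in front of the camera).
    """
    (px, py, pz), (sx, sy, sz) = center, size
    corners = [
        (px + dx * sx / 2, py + dy * sy / 2, pz + dz * sz / 2)
        for dx, dy, dz in itertools.product((-1, 1), repeat=3)
    ]
    us, vs = zip(*(cam.project(*c) for c in corners))
    # Depth here is the distance along the optical axis to the box center.
    return {"bbox_2d": (min(us), min(vs), max(us), max(vs)), "depth_m": pz}


cam = PinholeCamera(fx=1000, fy=1000, cx=960, cy=540)
# A pedestrian-sized box 10 m ahead and 2 m to the right of the camera.
print(box_label(cam, center=(2.0, 0.0, 10.0), size=(0.6, 1.8, 0.4)))
```

A human annotator can only approximate these numbers from the image; the generator computes them from the scene it built.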

Is overfitting a problem in this context? Is there a danger that a system trained with synthetic data would perform well on synthetic data, but fail in the real world?

Lebaredian: Synthetic data is actually a great way to solve for the overfitting problem, because it’s much easier for us to provide a diverse data set. If we’re training a network to recognize people’s facial expressions, but we only train it on Caucasian males, then we’ve overfit to Caucasian males and it will fail when you give it more diverse subjects. Synthetic data doesn’t make that worse. But with synthetic data it’s easier for us to create diversity of data. If I’m generating images of humans and I have a synthetic data generator, that allows me to change the configurations of people’s faces, their skin tone, eye color, hairstyle, and all of those things.
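Random sampling gives diversity on average. If the goal is to guarantee that no group is underrepresented, one option is to enumerate every combination of attributes and emit each one equally often. A sketch assuming a hypothetical face generator that accepts these three attributes (the attribute names and bins are illustrative only):

```python
import itertools
import random
from collections import Counter

# Hypothetical attribute values a synthetic face generator might expose.
ATTRIBUTES = {
    "skin_tone": ["I", "II", "III", "IV", "V", "VI"],  # e.g. Fitzpatrick-style bins
    "eye_color": ["brown", "blue", "green", "hazel"],
    "hairstyle": ["short", "long", "curly", "bald"],
}


def balanced_configs(attributes, per_combo, seed=0):
    """Emit every attribute combination exactly per_combo times, in shuffled order."""
    names = list(attributes)
    combos = itertools.product(*(attributes[n] for n in names))
    configs = [dict(zip(names, combo)) for combo in combos for _ in range(per_combo)]
    random.Random(seed).shuffle(configs)
    return configs


configs = balanced_configs(ATTRIBUTES, per_combo=5)
print(len(configs))  # 6 * 4 * 4 combinations * 5 = 480
print(Counter(c["skin_tone"] for c in configs))  # each tone appears 80 times
```

The number of combinations grows multiplicatively with each attribute added, so in practice one might balance only the attributes most likely to cause bias and randomize the rest.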

It seems like synthetic data could help with the big problem of algorithmic bias, since one of the sources of algorithmic bias is bias in data sets used to train AI systems. Can we use synthetic data to train AIs in the unbiased world that we would prefer to live in, as opposed to the world we actually live in?

Lebaredian: We’re synthesizing the worlds that our AIs are born in. They are born inside a computer and they’re just trained on whatever data we give them. So we can construct ideal worlds with the diversity that we want, and our AIs can be better for it. By the time they’re done, they are more intelligent than anybody we have out here in the real world. And when we put them in the real world, they behave better than they would have if they were only trained on what they see out here.

So what are the pitfalls to using synthetic data? Is it susceptible to adversarial attacks?

Lebaredian: Adversarial attacks, similar to overfitting problems, are not something that’s unique to synthetic data versus any other kind of data. The solution is to just have more data and better data.

The problem with synthetic data is that generating good synthetic data is hard. It requires having a great simulator like Omniverse, one that is physically accurate so it can match the real world well enough. If we create a synthetic data generator that makes images that look like cartoons, that’s not going to be good enough. You wouldn’t want to put a robot that only knows how to interpret cartoon worlds in a hospital where it’s going to work with the elderly and children. That would be a scary thing to do. You need your simulator to be as physically accurate as possible to make use of this. But it is an extremely difficult problem.

Eliza Strickland is a senior editor at IEEE Spectrum, where she covers AI, biomedical engineering, and other topics. She holds a master's degree in journalism from Columbia University.